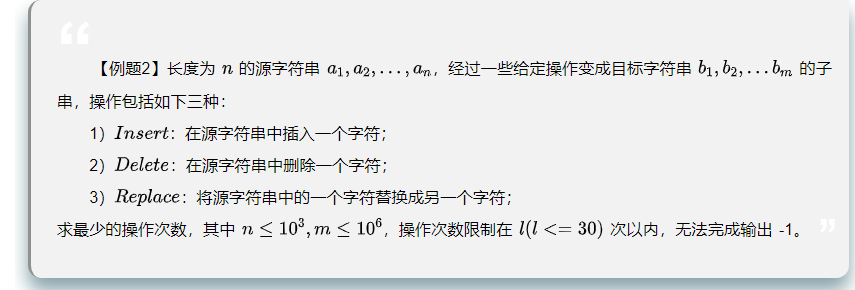

题目描述

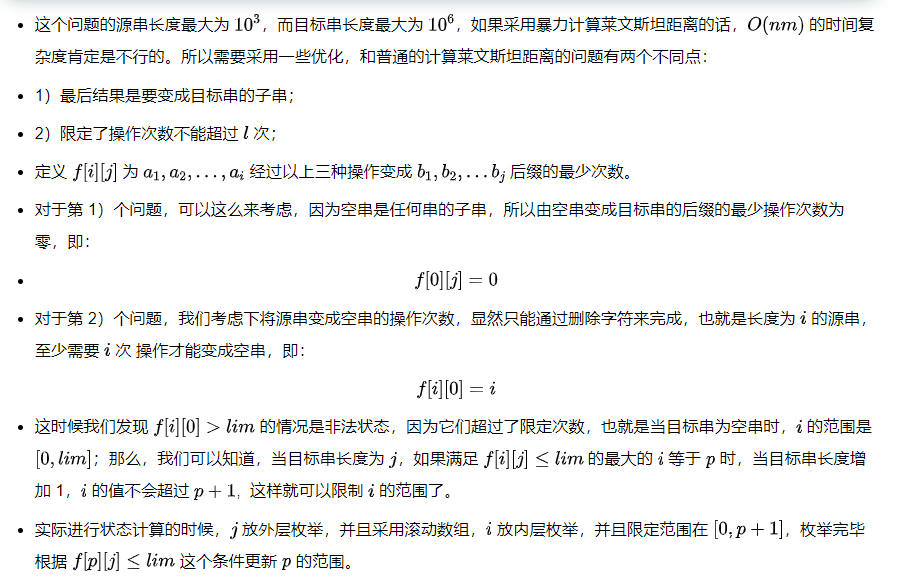

算法1

#include<iostream>

#include<cstdio>

#include<cstdlib>

#include<ctime>

#include<cmath>

#include<string>

#include<cstring>

#include<bitset>

#include<vector>

#include<queue>

#include<stack>

#include<set>

#include<map>

#include<iomanip>

#include<algorithm>

#define dbgfull(x) cerr << #x << " = " << x << " (line " << __LINE__ << ")"<<endl;

#define IOS ios::sync_with_stdio(0); cin.tie(0); cout.tie(0);

#define dbg(x) cerr << #x " = " << (x) << endl

#define endl "\n"

#define int long long

#define PI acos(-1)

//CLOCKS_PER_SEC clock()函数每秒执行次数

using namespace std;

const int INF = 0x3f3f3f3f;

const int N = 1e6+5,M = 1005;

int mod = 1e9 +7;

int n,m,lim,S,T;

char a[N],b[N];

int f[2][M];

void solve(){

cin >> b + 1 >> lim;

n = strlen(a + 1),m = strlen(b + 1);

memset(f,0x3f,sizeof(f));

for(int i = 0 ; i <= m ; ++i) f[0][i] = i;

int ans = INF,p = min(m - 1,lim - 1);

for(int i = 1 ; i <= n ; ++i){

f[i & 1][0] = 0;

int last = 0;

for(int j = 1 ; j <= p + 1; ++j){

f[i & 1][j] = min(f[i & 1][j - 1],f[i - 1 & 1][j]) + 1;

f[i & 1][j] = min(f[i & 1][j],f[i - 1 & 1][j - 1] + (a[i] != b[j]));

if(f[i & 1][j] <= lim) last = j;

}

if(p + 1 == m) ans = min(ans,f[i & 1][m]);

p = min(last,m - 1);

}

if(ans > lim) cout << "-1" << endl;

else cout << ans << endl;

}

signed main(){

IOS;

while(cin >> a + 1)

solve();

return 0;

}